Ever Wanted to Turn Off an AI Video the Moment It Started Speaking?

If you run ads, manage a brand, or produce social content, you’ve probably tried AI-generated UGC videos.

The visuals look good.

The lighting is clean.

The composition works.

But the moment the “Cantonese” starts…

The tone feels off.

The pauses sound unnatural.

The pronunciation doesn’t feel local.

It sounds like a robot reading translated text.

And for Hong Kong audiences?

That instantly kills trust.

When you're marketing to Cantonese-speaking consumers, voice authenticity directly impacts conversion rate. If it doesn’t sound real, it doesn’t sell.

Recently, I tested KLING 3.0 OMNI to see whether AI-generated Cantonese UGC could finally pass the “local ear test.”

Surprisingly?

It did.

In this post, I’ll break down:

- Why previous AI tools struggled with Cantonese

- What KLING 3.0 OMNI does differently

- The exact prompt I used

- How to turn one product image into a UGC-style short video

- How to integrate this into an automated marketing workflow

If you’re running DTC, beverage, beauty, or TikTok/Reels ads in Hong Kong — this matters.

Why Most AI Tools Fail at Cantonese UGC

1️⃣ Cantonese Is Tonal and Contextual

Most AI video tools prioritize:

- English

- Mandarin

- Lip-sync accuracy

- Cinematic realism

But Cantonese has:

- 6–9 tones depending on classification

- Sentence-ending particles (laa, wor, maa, ge)

- Casual spoken contractions

- Cultural phrasing that differs from written Chinese

Many tools “translate” into Cantonese text — but they don’t reproduce natural spoken rhythm.
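One way to catch a "translated" script before it reaches the video step is a quick check for written-Chinese function words. The sketch below is a rough heuristic, not a linguistic tool: the word list and the `review_script` helper are my own illustration, and it will produce false positives (for example, 的 in 的士 is perfectly normal Cantonese), so treat the output as a prompt for a native speaker's review.

```python
# Written-Chinese function words mapped to their usual spoken Cantonese forms.
# Illustrative, incomplete list; hits are candidates for review, not errors.
WRITTEN_TO_SPOKEN = {
    "的": "嘅",
    "是": "係",
    "不": "唔",
    "在": "喺",
    "沒有": "冇",
    "他": "佢",
    "她": "佢",
}

# A few common sentence-final particles (laa, wo, maa, ge, aa, lo).
SENTENCE_FINAL_PARTICLES = ("啦", "喎", "嘛", "嘅", "呀", "囉")


def review_script(script: str) -> dict:
    """Return written-style words found and spoken particles present."""
    flagged = {w: s for w, s in WRITTEN_TO_SPOKEN.items() if w in script}
    particles = [p for p in SENTENCE_FINAL_PARTICLES if p in script]
    return {"written_forms": flagged, "particles_found": particles}


if __name__ == "__main__":
    print(review_script("這個飲品是我的最愛"))      # written-style phrasing
    print(review_script("呢支嘢飲係我嘅最愛啦"))    # spoken-style phrasing
```

A script with several flagged written forms and no particles is a good candidate for a rewrite before you spend credits on generation.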

2️⃣ UGC ≠ Commercial Voiceover

UGC has a specific energy:

- Slight hesitation

- Natural hand movement

- Conversational tone

- Lifestyle vibe

Previous tools produced something that felt like a scripted advertisement, not a genuine user sharing their experience.

And UGC works because it feels human.

Pull Quote:

“For Cantonese UGC, sounding human matters more than sounding clear.”

How I Used KLING 3.0 OMNI

The process was simple.

Step 1: Upload the Product Image

No complex modeling required.

In my test, the important details held up:

- Product proportions remained accurate

- Logo stayed undistorted

- Lighting looked realistic

- Hand interaction looked natural

Step 2: Use This Prompt

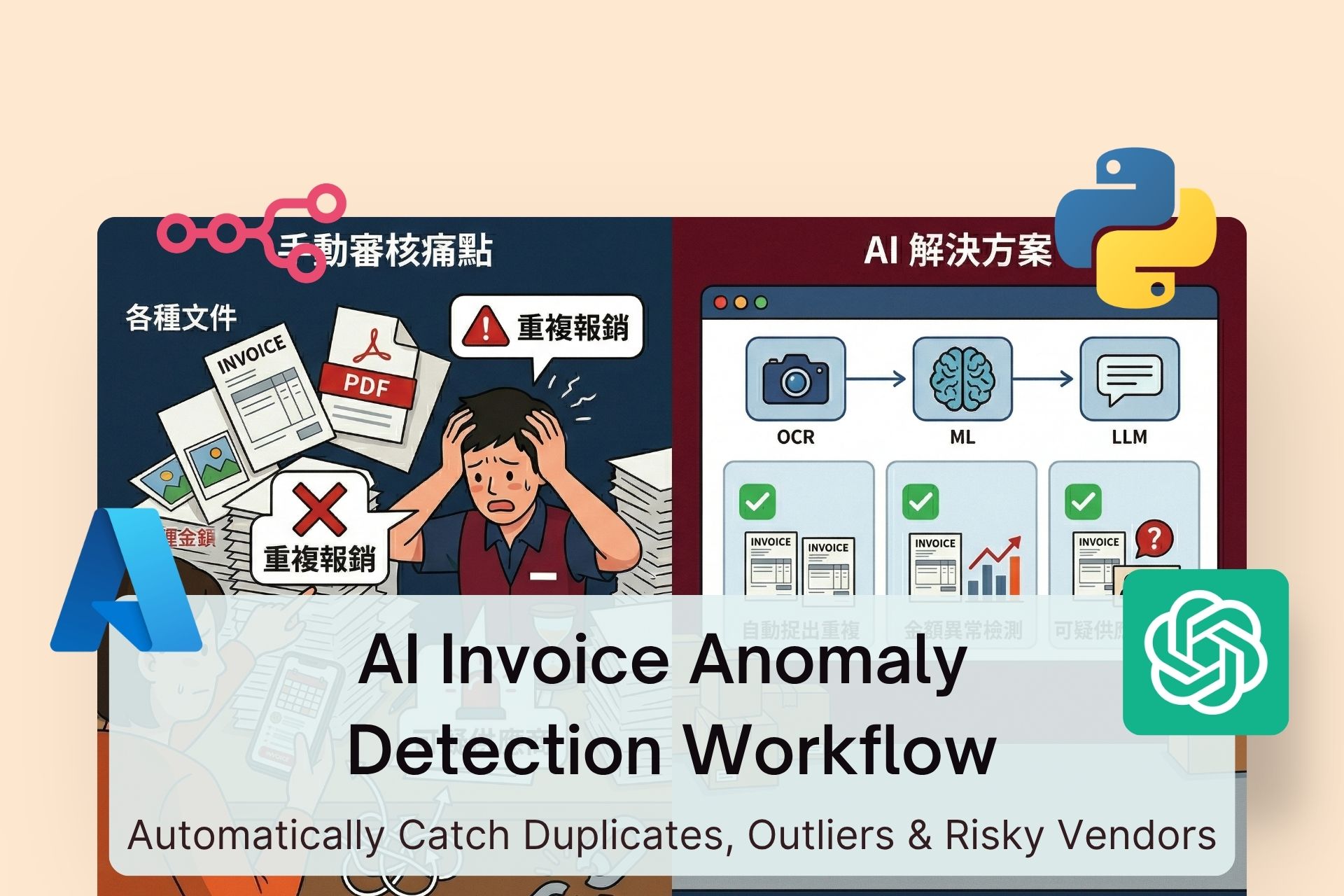

Refer to the @image_1 and generate a UGC-style short video.

Show a natural user-sharing scenario with a person speaking Cantonese to introduce the drink.

Keep the style authentic, casual, and lifestyle-driven, similar to a smartphone selfie or social media post.

Ensure the product appearance, logo, and proportions remain accurate, with realistic lighting and natural hand interaction.

Maintain a friendly, relatable tone while keeping commercial-grade visual quality.

Step 3: Generate

The results stood out in several ways:

✅ Natural Cantonese rhythm

✅ Correct tonal delivery

✅ Casual lifestyle energy

✅ Realistic lighting

✅ Authentic product interaction

It didn’t feel like a news anchor.

It felt like a friend recording a story.

[IMAGE: Before vs After Cantonese UGC Comparison]

Turning This Into a Scalable AI UGC System

Creating one video is helpful.

Creating 20 variations per week is powerful.

If you're testing ads, you need volume:

- 3 hooks per product

- 3 tone variations

- 2 call-to-action styles

- Multiple audience angles

That’s where workflow automation comes in.
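To see how quickly the volume adds up, here is a minimal sketch that enumerates the combinations from the list above. The hook, tone, and CTA labels are placeholder names I made up for illustration; swap in your own.

```python
from itertools import product

# Placeholder labels, not real campaign assets.
hooks = ["hook_a", "hook_b", "hook_c"]
tones = ["excited", "relaxed", "playful"]
ctas = ["shop_now", "try_it"]


def build_matrix(hooks, tones, ctas):
    """Return one record per hook/tone/CTA combination, with a stable ID."""
    return [
        {"id": f"v{i:02d}", "hook": h, "tone": t, "cta": c}
        for i, (h, t, c) in enumerate(product(hooks, tones, ctas), start=1)
    ]


matrix = build_matrix(hooks, tones, ctas)
print(len(matrix))  # 3 hooks x 3 tones x 2 CTAs = 18
print(matrix[0])
```

That is 18 variations for a single audience angle, which is why tracking each one by ID matters once you start testing.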

AI UGC Workflow Blueprint

🔹 Step 1: Generate Cantonese Scripts (ChatGPT)

Create:

- 3-second hooks

- Pain-point openings

- Social proof variations

- Multiple CTAs

🔹 Step 2: Generate Videos (KLING 3.0 OMNI)

- Upload product image

- Apply structured prompt

- Batch generate variations
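A "structured prompt" can be as simple as a fixed base plus a couple of variable lines. The sketch below reuses the prompt from earlier in this post as the base and appends a scenario and a delivery line. Those two extra lines and the `build_prompt` helper are my own assumption about how to vary the prompt, not a documented KLING format, and the code only builds text; you still paste or submit each prompt yourself.

```python
# Base prompt copied from the prompt used earlier in this post.
BASE_PROMPT = "\n".join([
    "Refer to the @image_1 and generate a UGC-style short video.",
    "Show a natural user-sharing scenario with a person speaking Cantonese to introduce the drink.",
    "Keep the style authentic, casual, and lifestyle-driven, similar to a smartphone selfie or social media post.",
    "Ensure the product appearance, logo, and proportions remain accurate, with realistic lighting and natural hand interaction.",
    "Maintain a friendly, relatable tone while keeping commercial-grade visual quality.",
])


def build_prompt(angle: str, tone: str) -> str:
    """Append hypothetical 'Scenario' and 'Delivery' lines to the base prompt."""
    extra = [f"Scenario: {angle}.", f"Delivery: {tone}."]
    return "\n".join([BASE_PROMPT, *extra])


angles = ["office lifestyle", "health-conscious switch", "casual daily refreshment"]
tones = ["excited recommendation", "relaxed daily habit", "playful sharing"]

# One prompt per angle/tone pair.
prompts = {(a, t): build_prompt(a, t) for a in angles for t in tones}
print(prompts[("office lifestyle", "playful sharing")])
```

Keeping the base fixed means any difference between two videos comes from the lines you changed on purpose.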

🔹 Step 3: Automate Asset Management

Using tools like Make or Zapier:

- Store outputs automatically

- Organize versions by campaign

- Sync into ad testing folders
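If you would rather start with a local script before wiring up Make or Zapier, the same organizing logic fits in a few lines. This is a sketch under my own assumptions: the folder layout (campaign, then angle) and the file naming scheme are hypothetical conventions, not something the tools above require.

```python
from pathlib import Path
import shutil


def asset_path(root: Path, campaign: str, angle: str, tone: str, version: int) -> Path:
    """Build a predictable destination path: root/campaign/angle/<name>.mp4."""
    name = f"{campaign}_{angle}_{tone}_v{version:02d}.mp4"
    return root / campaign / angle / name


def file_asset(src: Path, root: Path, campaign: str, angle: str,
               tone: str, version: int) -> Path:
    """Move a generated video into its campaign folder and return the new path."""
    dest = asset_path(root, campaign, angle, tone, version)
    dest.parent.mkdir(parents=True, exist_ok=True)
    shutil.move(str(src), str(dest))
    return dest


if __name__ == "__main__":
    # Only prints the target path; nothing is moved here.
    print(asset_path(Path("assets"), "sparkling-tea", "office", "excited", 1))
```

Encoding the angle and tone in the file name makes it easy to match an ad's performance back to the variation that produced it.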

Now you're not just generating videos — you're building a production system.

Real Application: Beverage Brand Example

Let’s say you're selling a low-sugar sparkling tea.

Instead of filming 10 influencers, you:

- Write 3 Cantonese script angles:

  - Office lifestyle

  - Health-conscious switch

  - Casual daily refreshment

- Generate 3 emotional tones:

  - Excited recommendation

  - Relaxed daily habit

  - Playful sharing

- Produce 9 UGC variations (3 angles × 3 tones).

Now you can test which emotional angle drives higher CTR and conversion.

This dramatically reduces production cost while increasing creative velocity.
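Comparing tones comes down to aggregating clicks and impressions per tone and computing CTR (clicks divided by impressions). Here is a minimal sketch; the row format is an assumption about what your ad platform export looks like, and the numbers are placeholders for illustration only, not results from my test.

```python
from collections import defaultdict


def ctr_by_tone(rows):
    """Sum impressions and clicks per tone, then return CTR per tone."""
    totals = defaultdict(lambda: {"impressions": 0, "clicks": 0})
    for row in rows:
        totals[row["tone"]]["impressions"] += row["impressions"]
        totals[row["tone"]]["clicks"] += row["clicks"]
    return {
        tone: t["clicks"] / t["impressions"]
        for tone, t in totals.items()
        if t["impressions"] > 0  # skip tones with no delivery
    }


# Placeholder numbers, NOT real campaign data.
rows = [
    {"tone": "excited", "impressions": 1000, "clicks": 20},
    {"tone": "relaxed", "impressions": 600, "clicks": 18},
    {"tone": "relaxed", "impressions": 400, "clicks": 12},
    {"tone": "playful", "impressions": 500, "clicks": 10},
]

rates = ctr_by_tone(rows)
best = max(rates, key=rates.get)
for tone, rate in rates.items():
    print(f"{tone}: {rate:.2%}")
print("best:", best)
```

With small budgets the gaps between tones can be noise, so rerun the comparison as impressions accumulate before you scale a winner.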

How to Implement This Yourself (Step-by-Step)

- Prepare a high-resolution product image

- Write 3 Cantonese UGC scripts

- Use KLING 3.0 OMNI with structured prompts

- Generate 6–9 variations

- Run small-budget ad tests

- Scale the winning creative

Pro Tip: Test emotional tone before you test editing style, so each round changes only one variable.

Conclusion

AI-generated UGC isn’t new.

But AI-generated Cantonese UGC that actually sounds local?

That’s new.

For Hong Kong brands, language authenticity directly impacts trust — and trust impacts sales.

KLING 3.0 OMNI doesn’t just solve a technical limitation.

It solves a cultural one.

And when you're scaling paid ads, that difference compounds quickly.