Compliance Shouldn’t Be a “Manual Document Hunt”

Every time a client sends a security questionnaire…

Every time you prepare for an ISO internal review…

Every time someone asks, “Are we GDPR compliant?”

Does your company end up doing this?

- IT, HR, and Ops scrambling through folders

- Endless “Did we write this anywhere?”

- Updating one policy… then re-checking everything again

You’re not failing at compliance.

You’re missing a repeatable, traceable, automated compliance workflow.

The real time drain isn’t writing explanations.

It’s:

- Mapping policies to regulations

- Searching for evidence

- Identifying gaps

- Keeping documentation consistent

- Repeating the same audit prep every quarter

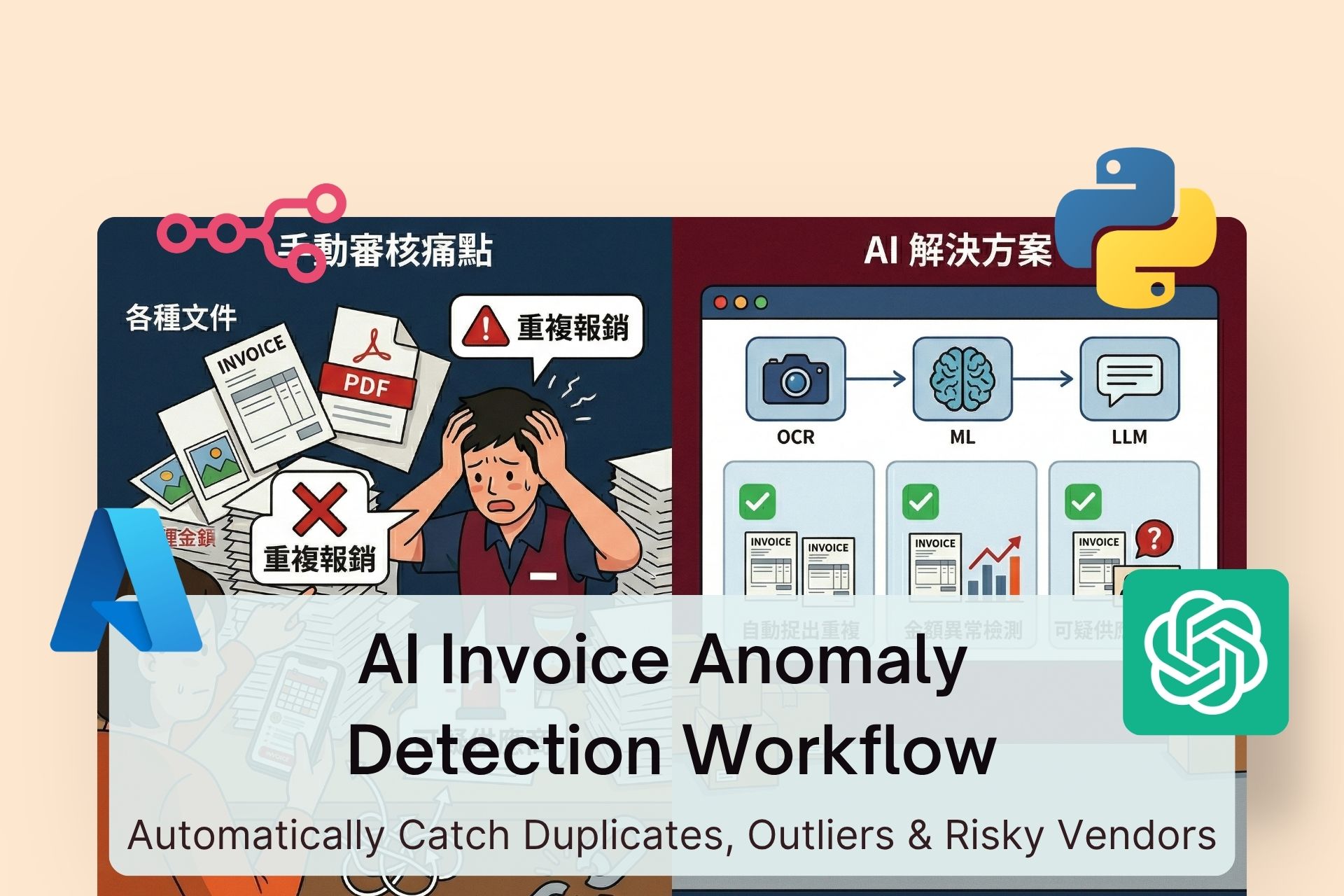

With the right architecture — RAG + Rule Engine + LLM — you can transform compliance from a manual nightmare into a structured automation pipeline:

Upload documents → Auto-parse → Compare against GDPR / ISO / ESG → Detect gaps → Generate dashboard reports

In this guide, you’ll learn:

- The complete system architecture

- Tech stack comparisons

- When to use rule-based logic vs LLM reasoning

- Privacy and risk considerations

- Copy-and-paste prompt templates

You can use this to build your MVP immediately.

1. Complete System Architecture

The workflow can be broken into seven layers.

1️⃣ Document Upload Layer

Goal: Centralized intake + access control + version tracking

Supported inputs:

- PDF and DOCX files

- SOPs

- Contracts

- Evidence files (screenshots, logs)

Key design considerations:

- Document classification (Policy / SOP / Contract / Evidence)

- Versioning (v1.0, v1.1)

- Owner tagging

- Sensitivity labels (Internal / Restricted)

Without structured metadata, everything downstream becomes chaotic.
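
As a rough illustration of the metadata above, here is a minimal Python sketch of an upload record. The class and field names (`DocumentRecord`, `doc_id`, `uploaded_on`) and the helper `latest_versions` are assumptions made for this example, not part of any specific product.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class DocType(Enum):
    POLICY = "Policy"
    SOP = "SOP"
    CONTRACT = "Contract"
    EVIDENCE = "Evidence"


class Sensitivity(Enum):
    INTERNAL = "Internal"
    RESTRICTED = "Restricted"


@dataclass(frozen=True)
class DocumentRecord:
    """Metadata captured at upload time (field names are illustrative)."""
    doc_id: str
    filename: str
    doc_type: DocType
    version: str            # e.g. "v1.0", "v1.1"
    owner: str              # team or person accountable for the document
    sensitivity: Sensitivity
    uploaded_on: date


def version_key(version: str) -> tuple[int, ...]:
    """Turn "v1.10" into (1, 10) so versions compare numerically."""
    return tuple(int(part) for part in version.lstrip("v").split("."))


def latest_versions(records: list[DocumentRecord]) -> dict[str, DocumentRecord]:
    """Keep only the highest version of each filename."""
    latest: dict[str, DocumentRecord] = {}
    for rec in records:
        current = latest.get(rec.filename)
        if current is None or version_key(rec.version) > version_key(current.version):
            latest[rec.filename] = rec
    return latest
```

Comparing versions as integer tuples avoids the string-sorting trap where "v1.10" would sort before "v1.2".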

2️⃣ Parsing Layer

Convert documents into structured, searchable, citeable text.

Required capabilities:

- OCR for scanned PDFs

- Heading hierarchy detection

- Semantic chunking (not fixed token splits)

- Page and paragraph IDs retained

Recommended output structure:

- chunk_id

- source

- page

- section

- text

- metadata

If you can’t cite evidence, your LLM shouldn’t be making compliance judgments.
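
To make the chunk structure concrete, here is a small Python sketch that splits one page of already-extracted text at heading boundaries and keeps the source, page, and section on every chunk. It is a simple heading-based stand-in for semantic chunking, and it assumes headings look like "1. Title" or "2.3 Title" on their own line. The function name `chunk_page` and the `chunk_id` format are hypothetical.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Chunk:
    chunk_id: str
    source: str
    page: int
    section: str
    text: str
    metadata: dict = field(default_factory=dict)


# Assumption: a heading is a numbered line such as "1. Purpose" or "2.3 Scope".
HEADING = re.compile(r"^(\d+\.)+\d*\s+[A-Z]")


def chunk_page(source: str, page: int, page_text: str) -> list[Chunk]:
    """Split one page into chunks at heading boundaries."""
    chunks: list[Chunk] = []
    section = "(no heading)"
    buffer: list[str] = []

    def flush() -> None:
        # Emit the buffered lines as one chunk under the current section.
        text = " ".join(buffer).strip()
        if text:
            chunk_id = f"{source}:p{page}:c{len(chunks) + 1}"
            chunks.append(Chunk(chunk_id, source, page, section, text))
        buffer.clear()

    for line in page_text.splitlines():
        line = line.strip()
        if not line:
            continue
        if HEADING.match(line):
            flush()
            section = line
        else:
            buffer.append(line)
    flush()
    return chunks
```

Because every chunk carries its own `source`, `page`, and `section`, any later LLM answer can point back to the exact passage it relied on.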

3️⃣ Vector Database (Semantic Retrieval Layer)

Used to power RAG (Retrieval-Augmented Generation).

Common options:

Pinecone

- Fully managed

- High stability

- Easy scaling

Weaviate

- Open-source

- Self-hostable

- Advanced features such as hybrid (keyword + vector) search

Supabase Vector (pgvector)

- Cost-effective

- Integrates with Postgres

- Easy audit logging

For most mid-sized companies building an MVP, Supabase is often sufficient.

4️⃣ RAG Query Flow

Workflow:

- Convert a GDPR / ISO / ESG requirement into a search query

- Retrieve top-k relevant chunks

- Pass evidence + citations to the LLM

- Force the LLM to answer strictly based on provided evidence

The goal isn’t to eliminate hallucination completely. It’s to drastically reduce it.
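
The last two steps of this flow can be sketched in a few lines of Python. The evidence format mirrors the citation style used in the prompt templates later in this guide. The function names and the dictionary keys on each chunk are assumptions for illustration, and the retrieval call itself is left out.

```python
def format_evidence(chunks: list[dict]) -> str:
    """Render retrieved chunks as citeable evidence lines."""
    lines = []
    for chunk in chunks:
        # Swap double quotes so the quoted text stays unambiguous.
        text = chunk["text"].replace('"', "'")
        lines.append(
            f'- [chunk_id: {chunk["chunk_id"]} | source: {chunk["source"]} '
            f'| page: {chunk["page"]} | text: "{text}"]'
        )
    return "\n".join(lines)


def build_prompt(requirement: str, chunks: list[dict]) -> str:
    """Combine the requirement and its evidence into an evidence-only prompt."""
    evidence = format_evidence(chunks) if chunks else "(no evidence retrieved)"
    return (
        "You may only evaluate based on the provided evidence.\n"
        "Do not use external knowledge or assumptions.\n\n"
        f"Requirement:\n{requirement}\n\n"
        f"Evidence:\n{evidence}\n"
    )
```

Stating explicitly that nothing was retrieved gives the model a clear reason to report insufficient evidence instead of guessing.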

5️⃣ Rule Engine (Hard Logic Layer)

Use rule-based checks for:

- Required sections missing

- Data retention period not specified

- Incident reporting deadlines not defined

- Access review documentation absent

Advantages:

- Deterministic

- Low cost

- Consistent

Rule engine checks should run before the LLM: they are cheap and deterministic, so obvious gaps are flagged before any model call is spent.
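
A rule engine for an MVP can be as small as a list of named checks over the document text. The Python sketch below is one possible shape, under the assumption that regular expressions are good enough for a first pass. The rule IDs, section title, and patterns are illustrative and would need tuning against your own policies.

```python
import re
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str
    check: Callable[[str], bool]  # returns True when the document passes


def has_section(title: str) -> Callable[[str], bool]:
    """Pass if a line starts with the title, optionally numbered ("3. Title")."""
    pattern = re.compile(rf"^\s*(\d+(\.\d+)*\.?\s+)?{re.escape(title)}\b", re.I | re.M)
    return lambda text: bool(pattern.search(text))


def mentions(pattern: str) -> Callable[[str], bool]:
    """Pass if the regular expression matches anywhere in the text."""
    compiled = re.compile(pattern, re.I)
    return lambda text: bool(compiled.search(text))


RULES = [
    Rule("R1", "Incident Response section present", has_section("Incident Response")),
    Rule("R2", "Retention period specified",
         mentions(r"(retain\w*|retention|kept|stored)\b.{0,80}?\b\d+\s+(day|month|year)s?")),
    Rule("R3", "Incident reporting deadline defined",
         mentions(r"within\s+\d+\s+(hour|day)s?")),
]


def run_rules(text: str) -> list[dict]:
    """Evaluate every rule and return one result row per rule."""
    return [
        {"rule_id": r.rule_id, "description": r.description, "passed": r.check(text)}
        for r in RULES
    ]
```

The same input always produces the same result rows, which is what makes these checks easy to audit.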

6️⃣ LLM Analysis Layer

Best suited for:

- Interpreting whether policy language satisfies regulatory intent

- Detecting contradictions between documents

- Writing risk explanations

- Suggesting remediation steps

Critical principle:

The LLM must only reason based on retrieved evidence.

All outputs should be structured as JSON.
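
Structured JSON output also lets you enforce the evidence-only principle in code. The sketch below parses a model reply and rejects it if it is malformed or cites a chunk that was never retrieved. The function name `validate_llm_output` is hypothetical, and it assumes citations arrive as `"chunk_id:<id>"` strings in a `supporting_citations` field, as in the prompt templates in this guide.

```python
import json


def validate_llm_output(raw: str, retrieved_ids: set[str], required_keys: set[str]) -> dict:
    """Parse an LLM reply; raise ValueError if it is malformed or cites unseen chunks."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"reply is not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValueError("reply must be a JSON object")

    # Citations are always required, whatever else the caller asks for.
    required = required_keys | {"supporting_citations"}
    missing = required - set(data)
    if missing:
        raise ValueError(f"missing keys: {sorted(missing)}")

    cited = {str(c).removeprefix("chunk_id:").strip() for c in data["supporting_citations"]}
    unknown = cited - retrieved_ids
    if unknown:
        raise ValueError(f"cites chunks that were not retrieved: {sorted(unknown)}")
    return data
```

A reply that fails validation can be retried or routed to a human reviewer instead of silently reaching the dashboard.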

7️⃣ Dashboard & Reporting Layer

Output should include:

- Compliance score per control

- Gap list

- Risk severity

- Evidence citations

- Version comparisons

The most valuable output isn’t the score.

It’s the prioritized remediation list.
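
One simple way to produce that list is to sort findings by risk severity and then by the lowest control score. The Python sketch below assumes each finding already carries a `risk_level` and a `score_0_to_5`. The ordering rule and the sample findings are invented for illustration.

```python
SEVERITY_RANK = {"high": 0, "medium": 1, "low": 2}


def prioritize(findings: list[dict]) -> list[dict]:
    """Order findings by severity first, then by lowest control score."""
    return sorted(
        findings,
        key=lambda f: (SEVERITY_RANK[f["risk_level"]], f["score_0_to_5"]),
    )


# Sample findings, made up for this example.
findings = [
    {"control": "Access review", "risk_level": "medium", "score_0_to_5": 1},
    {"control": "Data retention", "risk_level": "high", "score_0_to_5": 2},
    {"control": "Incident reporting", "risk_level": "high", "score_0_to_5": 0},
    {"control": "Vendor contracts", "risk_level": "low", "score_0_to_5": 3},
]

for rank, finding in enumerate(prioritize(findings), start=1):
    print(rank, finding["control"], finding["risk_level"], finding["score_0_to_5"])
```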

2. Tech Stack Comparison

OpenAI Embeddings vs BGE

OpenAI Embeddings

- Stable and high-quality

- Strong multilingual support

- Fast deployment

- Sends document text to a cloud API, so data handling must be reviewed first

BGE (Self-hosted)

- On-prem deployment possible

- Strong privacy control

- Requires infrastructure and tuning

If handling highly sensitive internal documents, consider on-prem embeddings.

n8n vs Make (Automation Orchestration)

n8n

- Open-source

- Self-hostable

- Suitable for internal network deployments

Make

- Easy UI

- Rapid MVP building

- SaaS-based

Need enterprise control → n8n

Need speed and experimentation → Make

When to Use Rule-Based vs LLM

| Task Type | Rule Engine | LLM |
| --- | --- | --- |
| Required section check | ✅ | ❌ |
| Regulatory intent interpretation | ❌ | ✅ |
| Cross-document contradiction | ❌ | ✅ |
| Field validation | ✅ | ❌ |

Golden principle:

The rule engine ensures consistency. The LLM provides reasoning.
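
One way to wire the two together is a small dispatcher that runs the deterministic checks first and calls the LLM only when they all pass. This is one possible policy, not the only one: you may prefer to run the LLM even after a rule failure. The function name `assess` and the shape of its arguments are assumptions for this sketch, and `llm_review` stands in for whatever model call you use.

```python
from typing import Callable


def assess(
    text: str,
    rule_checks: dict[str, Callable[[str], bool]],
    llm_review: Callable[[str], dict],
) -> dict:
    """Run deterministic checks first; call the LLM only if they all pass."""
    failed = [name for name, check in rule_checks.items() if not check(text)]
    if failed:
        # A hard failure is reported as-is, with no model call.
        return {"stage": "rules", "failed_rules": failed, "llm_result": None}
    return {"stage": "llm", "failed_rules": [], "llm_result": llm_review(text)}
```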

3. Risk & Compliance Considerations

1️⃣ LLM Is Not Legal Advice

Your system should be positioned as:

- Internal self-assessment tool

- Audit preparation support

Final sign-off must come from legal counsel or a DPO.

2️⃣ Data Privacy Safeguards

Minimum requirements:

- TLS encryption in transit

- Encryption at rest

- Role-based access control (RBAC)

- Audit logging

3️⃣ Internal Document Protection

Recommended safeguards:

- KMS-managed encryption keys

- Separate keys per tenant

- PII tokenization

- Least privilege access control
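
As a hedged example of PII tokenization, the sketch below replaces e-mail addresses with stable keyed tokens before text is sent to an embedding or LLM service. It covers e-mail addresses only, the regular expression is deliberately simple, and in practice the key would come from your KMS rather than from code. The function name and token format are made up for illustration.

```python
import hashlib
import hmac
import re

# Simplified e-mail pattern; real PII detection needs broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def tokenize_pii(text: str, key: bytes) -> tuple[str, dict[str, str]]:
    """Replace e-mail addresses with keyed tokens; return text and a token-to-value vault."""
    vault: dict[str, str] = {}

    def swap(match: re.Match) -> str:
        value = match.group(0)
        # HMAC keeps tokens stable per address without exposing the address.
        digest = hmac.new(key, value.lower().encode(), hashlib.sha256).hexdigest()[:12]
        token = f"<EMAIL_{digest}>"
        vault[token] = value
        return token

    return EMAIL.sub(swap, text), vault
```

The vault should be stored under the same access controls as the original documents, since it maps tokens back to real values.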

4. Copy-and-Paste Prompt Templates

Below are prompt templates you can copy and adapt. Each one restricts the model to the supplied evidence and returns JSON.

✅ Gap Detection Prompt

You are a compliance analyst.

You may only evaluate based on the provided evidence.

Do not use external knowledge or assumptions.

Objective:

Assess whether the company documents cover the specified Requirement.

Identify all compliance gaps.

If evidence is insufficient, set insufficient_evidence=true.

Requirement:

<Insert GDPR / ISO / ESG requirement text>

Evidence:

- [chunk_id: xxx | source: filename | page: P | text: "..."]

- [chunk_id: xxx | source: filename | page: P | text: "..."]

Output JSON:

{

"covered": true/false,

"insufficient_evidence": true/false,

"gaps": [

{

"gap": "...",

"risk_level": "low/medium/high",

"why": "...",

"missing_evidence": "..."

}

],

"supporting_citations": ["chunk_id:..."]

}

✅ Compliance Scoring Prompt

You are a pre-audit self-assessment tool.

Score the control from 0–5:

0 = Not addressed

1 = Mentioned but no process

2 = Process exists but no evidence

3 = Evidence exists but inconsistent

4 = Strong implementation, minor improvements needed

5 = Fully implemented and continuously monitored

Control:

<Insert control description>

Evidence:

- [chunk_id: xxx | text: "..."]

Output JSON:

{

"score_0_to_5": 0,

"score_reason": "...",

"what_to_improve_next": ["...", "..."],

"supporting_citations": ["chunk_id:..."],

"confidence_0_to_1": 0.0

}
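
Once scoring results come back, the confidence field can decide which ones need a human look. The Python sketch below averages only the results above a confidence threshold and lists the rest for review. The 0.6 threshold and the `control` key added to each result are assumptions for this example.

```python
def summarize_scores(results: list[dict], min_confidence: float = 0.6) -> dict:
    """Average confident scores and list low-confidence controls for review."""
    trusted = [r["score_0_to_5"] for r in results if r["confidence_0_to_1"] >= min_confidence]
    needs_review = [r["control"] for r in results if r["confidence_0_to_1"] < min_confidence]
    average = round(sum(trusted) / len(trusted), 2) if trusted else None
    return {"average_score": average, "needs_human_review": needs_review}
```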

✅ Risk Explanation Prompt

You are a compliance risk advisor.

Explain in business terms what risks arise if the issue is not remediated.

Provide actionable remediation steps.

You must only reference provided evidence.

If evidence is insufficient, state that clearly.

Issue:

<Insert gap description>

Evidence:

- [chunk_id: xxx | text: "..."]

Output JSON:

{

"risk_story": "...",

"likely_impact": ["Regulatory Risk","Client Risk","Operational Risk"],

"recommended_actions": [

{

"action": "...",

"owner_role": "...",

"effort": "S/M/L",

"expected_days": 0

}

],

"supporting_citations": ["chunk_id:..."]

}

5. How to Build Your MVP

Recommended order:

- Select 20 high-impact controls first

- Build parsing + citation infrastructure

- Implement rule engine checks

- Add RAG + LLM reasoning

- Output simple structured reports (CSV or dashboard)

Do not start with the full ISO framework.

Start focused.
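
For the final step, a CSV report needs nothing beyond the Python standard library. The sketch below flattens findings into one row per control. The column names and the sample control ID are illustrative, and citations are joined with semicolons so they fit in a single cell.

```python
import csv
import io

FIELDS = ["control", "score", "risk_level", "gap", "citations"]


def to_csv(rows: list[dict]) -> str:
    """Serialize findings to CSV text, one row per control."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS, lineterminator="\n")
    writer.writeheader()
    for row in rows:
        # Join the citation list so it fits in a single cell.
        writer.writerow({**row, "citations": ";".join(row["citations"])})
    return buffer.getvalue()


# Sample row, made up for this example.
report = to_csv([
    {"control": "CTRL-01", "score": 2, "risk_level": "high",
     "gap": "No access review record", "citations": ["c1", "c2"]},
])
print(report)
```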

Conclusion

AI compliance automation is not about replacing people.

It’s about:

- Speeding up reviews

- Standardizing evaluations

- Reducing repetitive audit prep

- Increasing traceability

If you clearly separate the responsibilities of the rule engine, RAG, and the LLM, you transform compliance from chaos into a structured, repeatable workflow.